A part of the IVA 2020 Conference

18th October 2020

11:00 – 16:00, UK BST

The rise of consumer Virtual Reality systems has raised the public profile of movement-based interaction techniques. We can interact with VR using gestures and other large-scale movements that are not possible using a mouse or touchscreen. The IVA research community has worked with this kind of full-body interaction for decades, particularly the use of body language to interact with virtual agents. This kind of immersive interaction is therefore an excellent application of IVAs, and IVAs are likely to become mainstream in VR in the near future.

In IVA research we often aim to accurately recognise participants’ natural body language and have agents respond to it. While this is an important research aim, in practical applications recognition errors often mean that the range of gestures and movements needs to be constrained. As Höök (2018) points out, movement interaction designs are not “natural”; they are designed to seem natural. Interactive Machine Learning is a promising approach for designing movement interaction because it allows developers to capture and implement complex movements in their applications by simply performing them. This workshop will investigate how these approaches to interaction design for body movement can be applied to interaction with IVAs, particularly to non-verbal communication with IVAs.

This will be a hands-on workshop separated into two parts. The morning session will be available to all. We will introduce a new interactive machine learning tool, InteractML, and an accompanying movement ideation method, both being developed to make movement interaction design faster, more adaptable and more accessible to creators of varying experience and backgrounds. Attendees will collaborate using their own body movements to explore new ways of interacting with IVAs and discover technologies that allow rapid implementation of these designs.

For those attendees with access to a VR set-up, the afternoon prototyping session will be an opportunity to implement their own interaction designs by creating basic implementations of IVA interaction techniques.

This workshop is being delivered as part of a project funded by the UK Engineering and Physical Sciences Research Council, 4i: Immersive Interaction Design for Indie developers using Interactive machine learning. It will be the first application of the techniques developed in the project, but as long-term researchers on Virtual Agents, we see IVAs not merely as an excellent application of Immersive Interaction Design but as its holy grail.

Activities

Introduction

This workshop presents a project developing a new immersive tool that uses interactive machine learning to recognise and implement complex movement interaction designs. We will introduce our project activities, team, ethos and research so far.

Embodied Sketching for movement interaction with IVAs exercise

We will run an interactive session using our embodied ideation technique based on embodied sketching: designing movement interaction through movement (Márquez Segura et al. 2016). Rather than designing on paper, we will collaborate using our own body movements to explore new ways of interacting. These will be developed through physical “body storming” and “sketched” with our bodies by acting them out.

Interactive Machine Learning Presentation and InteractML Demo

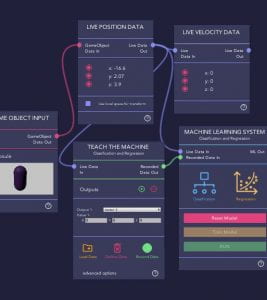

Interactive Machine Learning uses machine learning technologies within an iterative design process that allows for rapid prototyping and refinement. This makes it possible to design and implement movement interaction by performing body movements. We will present InteractML, the software toolkit we are developing to support interactive machine learning and movement interaction design in Unity3D (Gonzalez Diaz et al. 2019).
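To make the iterative loop concrete, here is a minimal sketch in Python of the general idea: perform a few example poses, train, test, then add another example where the result is not what the designer wants. It is not InteractML's API or its algorithm. The `GestureClassifier` class, the nearest-neighbour method, the two-number feature vectors and the gesture labels are all assumptions made up for illustration.

```python
import math
from collections import Counter


class GestureClassifier:
    """Toy k-nearest-neighbour classifier (hypothetical, for illustration only)."""

    def __init__(self, k=1):
        self.k = k
        self.examples = []  # list of (feature_vector, label) pairs

    def add_example(self, features, label):
        # "Recording" a performed pose: store its features with the chosen label.
        self.examples.append((tuple(features), label))

    def classify(self, features):
        # With no recorded examples there is nothing to compare against.
        if not self.examples:
            return None
        # Sort stored examples by Euclidean distance to the new pose, keep the k closest.
        nearest = sorted(
            self.examples, key=lambda ex: math.dist(ex[0], features)
        )[: self.k]
        # Majority vote among the nearest examples.
        votes = Counter(label for _, label in nearest)
        return votes.most_common(1)[0][0]


# Iteration 1: record two poses. Features are made-up values standing in for
# (hand sideways offset, hand height) in metres.
clf = GestureClassifier(k=1)
clf.add_example([0.0, 1.8], "wave")
clf.add_example([0.0, 0.9], "rest")

# Test with a new pose; it sits slightly closer to the "rest" example.
first_guess = clf.classify([0.4, 1.3])

# Iteration 2: the designer wants that pose to count as "point",
# so they perform it once more as a labelled example and test again.
clf.add_example([0.5, 1.3], "point")
second_guess = clf.classify([0.4, 1.3])

print(first_guess, second_guess)
```

The point of the sketch is the workflow rather than the classifier: the design is refined by adding performed examples and re-testing, not by hand-writing recognition rules.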

Prototype Building with support from the team on our Discord server

In the afternoon the workshop will take the form of a hands-on prototype building session, where you will work with a special Unity project that is set up to use InteractML to design movement interaction with IVAs. With full support from the team on our dedicated Discord server, you will have the opportunity to implement your movement interaction designs using our InteractML tool. To attend this session, please see the prerequisites below.

Reflection and group discussion: How does Movement Interaction apply to IVAs?

The workshop will end with an opportunity to reflect on the approach and discuss its application to IVAs. Participants who would like to continue with this approach will then be given access to our tools and long-term support.

Schedule

11:00: Introduction and Embodied Sketching for movement interaction with IVAs exercise

11:30: Interactive Machine Learning Presentation and InteractML Demo with Q&A

12:15: Lunch

13:00: Prototype Building with support on Discord

14:45: Break

15:00: Project Presentations

15:30: Reflection and group discussion: How does Movement Interaction apply to IVAs?

16:00: Finish

Prototype building session prerequisites

- Access to a cabled VR HMD and the computing capabilities to support VR interaction (an Oculus Quest is possible if a link cable has been set up and tested beforehand).

- Unity 2019.4.11f1 installed, and experience of Unity at beginner level or above.

Registration

Workshop registration is now open! Please register here.

Please specify whether you are attending all parts of the workshop or just the morning session. Once you have registered we will forward links to join the sessions. If you are joining the afternoon prototype session, instructions on how to set up the example Unity project will be sent prior to the session.

Questions?

Email Nicola Plant: n.plant@gold.ac.uk

Organisers

Marco Gillies is a Reader in Computing at Goldsmiths, University of London. He has over 20 years of experience in research on both Intelligent Virtual Agents and Virtual Reality. His work centres on the application of machine learning to recognising and generating non-verbal communication behaviour in immersive contexts.

Nicola Plant is a new media artist, researcher and developer currently working as a qualitative researcher on the 4i project at Goldsmiths, University of London. Nicola holds a Ph.D in Computer Science from Queen Mary University of London, focusing on embodiment, non-verbal communication and expression in human interaction. Her artistic practice specialises in movement-based interactivity and motion capture, and she creates interactive artworks exploring expressive movement within VR.

Clarice Hilton is the lead developer on the 4i project at Goldsmiths, University of London. She has previously worked as a Creative Technologist, Artist and Researcher in immersive technology. In her interdisciplinary practice she collaborates with filmmakers, dance practitioners, theatre makers and other artists to explore participatory and embodied experiences.

References

Gillies, M. (2019) ‘Understanding the Role of Interactive Machine Learning in Movement Interaction Design’, ACM Transactions on Computer-Human Interaction, 26(1), pp. 1–34. doi: 10.1145/3287307.

Gonzalez Diaz, C., Perry, P. and Fiebrink, R. (2019) ‘Interactive Machine Learning for More Expressive Game Interactions’, Proceedings of the IEEE Conference on Games. London, UK.

Höök, K. (2018) ‘Designing with the Body – Somaesthetic Interaction Design’. MIT Press. doi: 10.5753/ihc.2018.4168.

Márquez Segura, E. et al. (2016) ‘Embodied Sketching’, in Proceedings of the 2016 CHI Conference on Human Factors in Computing Systems, pp. 6014–6027. doi: 10.1145/2858036.2858486.